Event streaming

Make data available with eMagiz without building dedicated integrations between applications

Event streaming consists of two parts:

- Events: A source publishes certain data in the form of an 'event', to which other applications can subscribe in order to use that data.

- Streaming: The events are available to the applications at all times. There is no guaranteed delivery or processing of the message, because the source has no direct relationship with the application that wants to use the data.

The source does, however, know who is subscribed to which event, and therefore who all the consumers of its events are.
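To make the publish/subscribe idea above more concrete, here is a minimal in-memory sketch in Python. The `EventTopic` class and its methods are hypothetical illustrations, not part of eMagiz, ActiveMQ Artemis, or Apache Kafka. The publisher only appends events to a log and never calls or waits for a consumer; the topic keeps track of who is subscribed, and each subscriber reads at its own pace from its own position, so nothing guarantees that a given subscriber ever processes a given event.

```python
from dataclasses import dataclass, field
from typing import Any, Dict, List


@dataclass
class EventTopic:
    """Hypothetical in-memory topic: an append-only event log plus a subscriber registry."""
    name: str
    log: List[Any] = field(default_factory=list)
    # subscriber name -> index of the next event that subscriber will read
    offsets: Dict[str, int] = field(default_factory=dict)

    def subscribe(self, subscriber: str, from_beginning: bool = True) -> None:
        # The topic knows who is subscribed; the publisher never talks to subscribers directly.
        self.offsets[subscriber] = 0 if from_beginning else len(self.log)

    def publish(self, event: Any) -> None:
        # Fire-and-forget: append the event; no delivery or processing is awaited.
        self.log.append(event)

    def poll(self, subscriber: str, max_events: int = 10) -> List[Any]:
        # Each subscriber reads up to max_events from its own offset and advances it.
        start = self.offsets[subscriber]
        batch = self.log[start:start + max_events]
        self.offsets[subscriber] = start + len(batch)
        return batch

    def subscribers(self) -> List[str]:
        return list(self.offsets)


topic = EventTopic("customer-updates")
topic.subscribe("crm")
topic.publish({"customer_id": 42, "city": "Utrecht"})
topic.subscribe("webshop", from_beginning=False)
topic.publish({"customer_id": 42, "city": "Amersfoort"})
print(topic.poll("crm"))      # both events
print(topic.poll("webshop"))  # only the event published after it subscribed
```

A subscriber that joins late with `from_beginning=False` only sees events published after it subscribed, while one that starts from the beginning can replay the full history at any time.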

Common scenarios

Event streaming is especially suited to business processes that involve enormous amounts of data. It makes it possible to actually put all of that available data to work, making primary business processes better and smarter and increasing customer satisfaction.

In addition, event streaming is an ideal integration pattern for the reliable, asynchronous, and flexible exchange of master data or distribution of transactional data. It fits situations in which applications are called at a certain point in the process and must always have the latest, most up-to-date, and reliable master data available to run the process within the application smoothly.
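One way to picture "always having the latest master data" is to reduce a stream of keyed events to the most recent value per key. The sketch below is a hypothetical illustration in plain Python, similar in spirit to Kafka log compaction (where a record with a null value acts as a deletion marker, or "tombstone"); it does not use any eMagiz or Kafka API, and the function and field names are assumptions chosen for the example.

```python
from typing import Any, Dict, Iterable, Optional, Tuple

Record = Dict[str, Any]


def latest_master_data(events: Iterable[Tuple[str, Optional[Record]]]) -> Dict[str, Record]:
    """Hypothetical helper: keep only the newest value per key.

    Events are processed in order; a value of None is treated as a
    tombstone and removes the key from the resulting state.
    """
    state: Dict[str, Record] = {}
    for key, value in events:
        if value is None:
            state.pop(key, None)  # deletion of this master data record
        else:
            state[key] = value    # newer value replaces any older one
    return state


stream = [
    ("cust-42", {"name": "Jansen", "city": "Utrecht"}),
    ("cust-7", {"name": "De Vries", "city": "Delft"}),
    ("cust-42", {"name": "Jansen", "city": "Amersfoort"}),
    ("cust-7", None),
]
print(latest_master_data(stream))
# {'cust-42': {'name': 'Jansen', 'city': 'Amersfoort'}}
```

An application that is called at an arbitrary moment can consult this reduced state instead of replaying or waiting for individual updates.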

Streaming process

An important part of event streaming is the streaming process, in which the data flowing between systems is processed and analyzed. This makes it possible to respond immediately to situations that arise from real-time data insights and, for example, from sensor data in IoT solutions. In this way, companies create new ways of engaging with their customers, increase customer satisfaction, and reduce losses, mainly because disruptions in the process can be addressed much earlier than before.

Accelerate event streaming and take integrations to the next level

Why choose event streaming from eMagiz?

Respond immediately to data (events) received in real time

Transform data into any desired format or protocol

Process large volumes of data with a limited set of resources

Clean up unusable data so that it is not processed and stored unnecessarily

A clear overview of the complete integration landscape

Recognize patterns in metrics, IoT data, and transaction logs thanks to real-time data

PostNL's Cross Border Solutions division uses event streaming because of the large volume of data, the relatively low complexity, and the repeated use of the generated data.

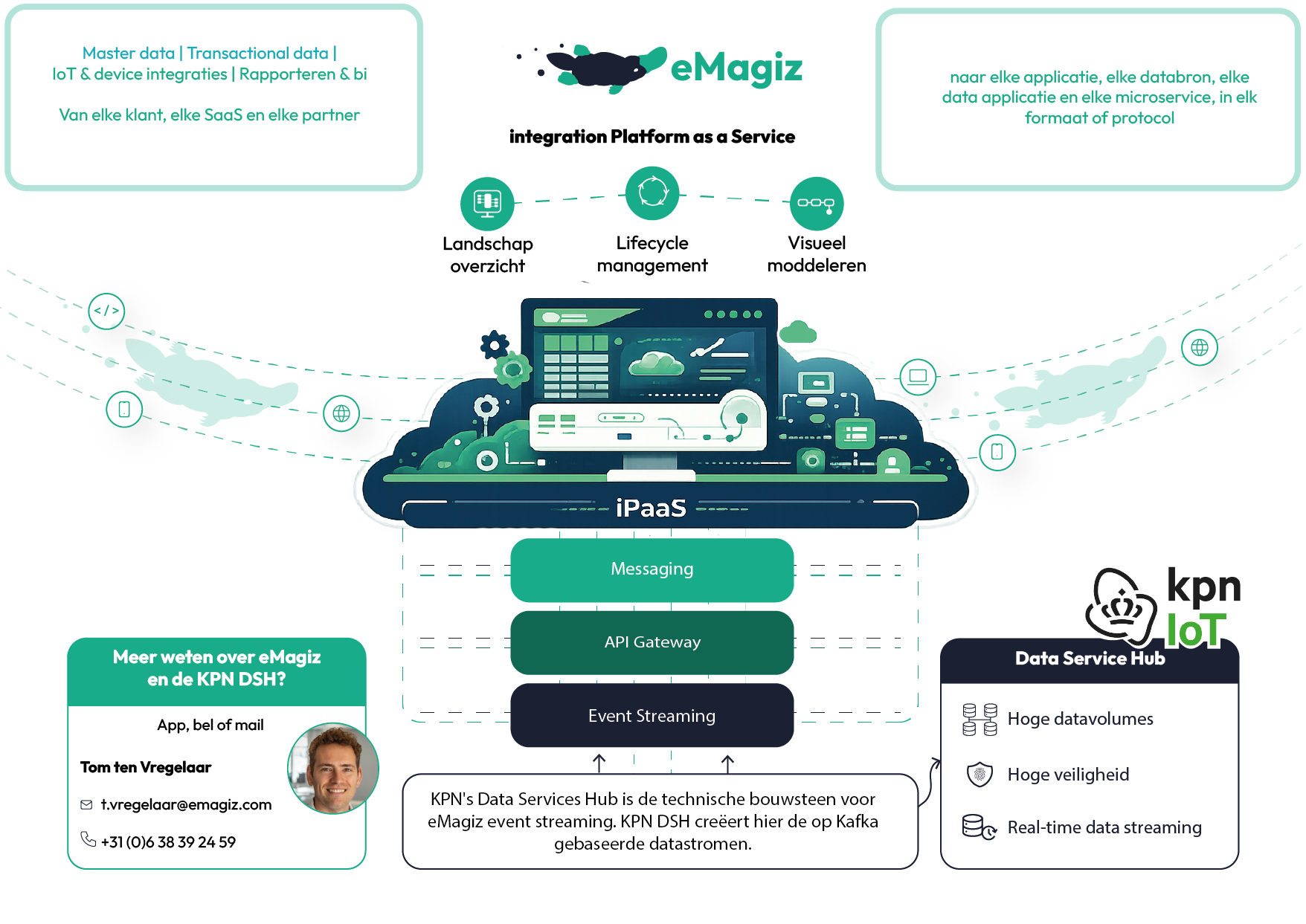

Technical aspects of event streaming

Data management should not be a hassle; it should simply be secure and accessible for every organization. To support the event streaming integration pattern and guarantee security, the eMagiz platform uses modern technologies such as ActiveMQ Artemis and Apache Kafka, running on the infrastructure of the KPN Data Services Hub (DSH), with which eMagiz partners for this purpose. Event streaming requires a distributed technical architecture, which in eMagiz is cloud-agnostic and works both in the cloud and on-premises. Once an integration model has been developed in the eMagiz platform, it is deployed in a distributed fashion across the various eMagiz runtimes (cloud and/or on-premises).

KPN Data Services Hub

Since 2025, eMagiz has partnered with KPN's Data Services Hub to support Kafka-based data streams within its event streaming functionality. This infrastructure meets eMagiz's strict requirements for multi-tenancy and security. In return, the partnership gives KPN customers the option to design data integrations visually and, in addition to data streaming, to use other data exchange patterns within a single integration platform.

Hybrid use of integration patterns

The eMagiz iPaaS supports a hybrid application of the Messaging, API Gateway, and Event Streaming integration patterns. This provides a consistent user experience and gives developers a single, centralized interface to work with. Within eMagiz, the event streaming pattern makes it possible to expose specific data as events to which other applications can subscribe. As a result, those applications have access to current data from a database exactly when they need it, instead of depending on arbitrary distribution moments.

Ready to use event streaming to deliver integrations and new projects faster?

Get started with us or with one of our partners.